Background:

Every Canary deployment is made up of at least two pieces: the Canaries themselves (hardware, VM or cloud), and the customer’s dedicated console hosted in EC2, which the Canaries report in to. We’ve gone to great lengths to make sure that the code and infrastructure we run are secure, and we ensure that any unexpected activity on these servers is raised as an alert.

A few weeks ago, this real-time auditing activity tripped an alert on a development server. Servers are either built as production servers, which have been tested and effectively have a frozen footprint, or as development servers which are used by our developers for testing. The anomalous activity triggered our incident response process, and the response team swung into action.

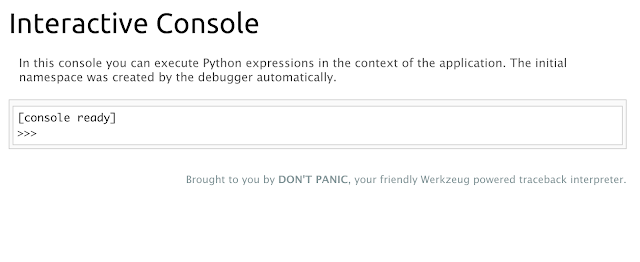

A quick check on the server showed that it was owned by an automated tool blasting through the AWS address space looking for a bunch of simple misconfigs and attack vectors. On this dev server, it found a debug interface at the path /console. This debug console was foundational to the framework and was automatically included whenever the framework’s debug flag was enabled. Importantly, it’s served from deep within the framework, and introspecting the application’s internal routes didn’t show /console.

What a gift! The developer working on this console enabled debug mode without realizing its full implications, and the attacker’s script found it in time. The fault here was ours (we turned on debug mode in Flask), but our surprise came from the fact that we never expected our webserver to serve up pages we didn’t know about.

The immediate fix was to disable the debug flag and reboot the server, which killed the access. (We subsequently tore down all our dev servers, not because of signs of compromise, but because our tooling makes it trivial to launch new ones.) However, we wanted to examine the pattern a little more closely, to see if we could reduce the number of surprises we can’t mitigate. If /console was present, what other surprises await us, now or in the future as new development happens?

So we started looking at creating a whitelist generator for NGINX, which is the web server we rely on. What we had in mind was a stand-alone tool that would coax NGINX to only serve documents from known paths, with minimal effort and minimal impact to an existing setup.

1) What we tried that didn’t work

NGINX has a module which allows one to embed Lua scripts inside the config file. We explored this (because we really wanted an opportunity to play with Lua) but ultimately rejected it, as Lua support isn’t part of most default NGINX packages. We’d have to build the NGINX package from scratch, which would create an additional operational burden, and so fails our minimal effort and impact goals.

We then explored njs, the NGINX JavaScript module, which is a scripting module developed and supported by NGINX themselves. It installs on top of existing NGINX setups and is super cool and interesting, but it also turned out to be too limited for our needs. (It essentially prevented us from calling out and inspecting the Flask setup to learn about valid routes.)

2) What we currently do

tl;dr

- Grab nginx_flaskapp_whitelister from our GitHub account;

- Run nginx_flaskapp_whitelister to generate a new include.whitelist file;

- Include this file in your current NGINX config.

The skinny:

A typical Flask app has a url_map object which holds all of the routes used in the application. Assume we have a Flask app, with defined routes that look something like this:

Our url_map will look like this:
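To make this concrete, here is a minimal sketch of such an app and of walking its url_map. The route names (`/`, `/login`, `/user/<int:user_id>`) and the `list_rules` helper are hypothetical, chosen only for illustration; `app.url_map.iter_rules()` is the standard Flask/Werkzeug way to enumerate registered rules.

```python
from flask import Flask

app = Flask(__name__)


@app.route("/")
def index():
    return "home"


@app.route("/login", methods=["GET", "POST"])
def login():
    return "login"


@app.route("/user/<int:user_id>")
def user(user_id):
    return f"user {user_id}"


def list_rules(flask_app):
    """Return sorted (rule, endpoint) pairs for every route the app has registered."""
    return sorted((rule.rule, rule.endpoint) for rule in flask_app.url_map.iter_rules())


if __name__ == "__main__":
    # Print one line per registered route, e.g. "/login -> login".
    for rule, endpoint in list_rules(app):
        print(f"{rule} -> {endpoint}")
```

Note that /console does not appear in this listing even with debug mode on, which matches what we saw: the debug console is served from outside the application’s own route table.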

Now NGINX has a concept of whitelisting routes, using what they refer to as “location directives”. A simple pseudo-configuration will look something like this:

So the basic lookup sequence of NGINX, to determine what to serve up for a requested path is as follows:

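- NGINX first looks for an exact match (locations defined with ‘=’). If one is found, the search stops.

- Otherwise it finds the longest matching prefix location. If that location carries the ‘^~’ modifier, it is used and no regular expressions are checked.

- Otherwise it tests the regular expression locations in the order they appear in the config and uses the first one that matches. If none match, it falls back to the longest matching prefix found in the previous step.

Thus by specifying locations using the ‘=’ and ‘^~’ modifiers, you are able to short-circuit the regular expression pass and override the natural behaviour of route lookups.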
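The lookup order above can be sketched as a small Python model. This is a simplified illustration, not NGINX’s implementation: it ignores named locations and case-insensitive (`~*`) matches, it uses Python’s `re` rather than PCRE, and the `match_location` function and sample locations are hypothetical.

```python
import re
from typing import List, Optional, Tuple

# A location is (modifier, pattern); modifier is "=", "^~", "" (plain prefix) or "~" (regex).
Location = Tuple[str, str]


def match_location(locations: List[Location], path: str) -> Optional[Location]:
    # 1. An exact ("=") match wins immediately.
    for loc in locations:
        if loc[0] == "=" and loc[1] == path:
            return loc
    # 2. Remember the longest matching prefix location ("" or "^~").
    prefixes = [loc for loc in locations
                if loc[0] in ("", "^~") and path.startswith(loc[1])]
    best = max(prefixes, key=lambda loc: len(loc[1]), default=None)
    # 3. "^~" on the longest prefix suppresses the regex pass.
    if best is not None and best[0] == "^~":
        return best
    # 4. Regex locations are tried in config order; the first match wins.
    for loc in locations:
        if loc[0] == "~" and re.search(loc[1], path):
            return loc
    # 5. Otherwise fall back to the remembered prefix (or None if there was none).
    return best


if __name__ == "__main__":
    sample = [("=", "/login"), ("^~", "/static/"), ("~", r"^/user/\d+$"), ("", "/")]
    for p in ("/login", "/static/app.js", "/user/42", "/console"):
        print(p, "->", match_location(sample, p))
```

In this model an unlisted path such as /console only ever reaches the catch-all ‘/’ location, which is the property a whitelist relies on.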

We make use of this by extracting all rules/routes defined for our app (by grabbing them from the app’s associated url_map object) and mangling them into a neatly bundled, bite-size chunk for NGINX. We then fetch the current NGINX config describing the ‘/’ route of the running server (most likely serving the allowed current endpoints).
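As a rough sketch of the “mangling” step (not necessarily how nginx_flaskapp_whitelister does it), a Flask rule string can be turned into an NGINX location header: static rules become exact matches, and rules with variable parts become anchored regular expressions. The `rule_to_location` helper and the converter-to-regex table are assumptions made for illustration, and the code assumes Python 3.7+ behaviour for `re.escape`.

```python
import re

# Hypothetical mapping from Flask/Werkzeug converter names to regex fragments.
CONVERTER_PATTERNS = {
    "string": r"[^/]+",
    "int": r"\d+",
    "float": r"\d+\.\d+",
    "path": r".+",
}

# Matches "<name>" or "<converter:name>" inside a rule string.
VARIABLE = re.compile(r"<(?:(?P<conv>[A-Za-z_]\w*):)?(?P<name>[A-Za-z_]\w*)>")


def rule_to_location(rule: str) -> str:
    """Turn one Flask rule string into an NGINX location header (without a body)."""
    if "<" not in rule:
        # No variable parts: an exact match is enough.
        return f"location = {rule}"
    parts = []
    pos = 0
    for m in VARIABLE.finditer(rule):
        # Escape the literal text between variables, then add the variable's pattern.
        parts.append(re.escape(rule[pos:m.start()]))
        parts.append(CONVERTER_PATTERNS.get(m.group("conv") or "string", r"[^/]+"))
        pos = m.end()
    parts.append(re.escape(rule[pos:]))
    return "location ~ ^" + "".join(parts) + "$"


if __name__ == "__main__":
    for r in ("/login", "/user/<int:user_id>", "/static/<path:filename>"):
        print(rule_to_location(r))
```

Anchoring each pattern with `^` and `$` keeps a whitelisted route from accidentally matching longer, unlisted paths.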

We then create a separate include.whitelist file with the following NGINX configuration:

Including this whitelist file ahead of the location directive definitions ensures that the config written by the tool will take priority over possible conflicts without overriding or ignoring additional existing configuration oddities in the current setup.
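A minimal sketch of what generating such a file could look like follows. It assumes the body of the existing ‘/’ block is copied into each whitelisted location and that a catch-all ‘/’ returning 404 replaces the original one (NGINX rejects duplicate locations, so the original ‘/’ block could not stay alongside it). The `render_whitelist` helper and the upstream address are hypothetical, and the real tool’s output may be laid out differently.

```python
from typing import Iterable


def render_whitelist(location_headers: Iterable[str], root_body: str) -> str:
    """Build the text of an include file from location headers and a shared body."""
    # Re-indent the directives copied from the existing "/" block.
    indented = "\n".join("    " + line.strip()
                         for line in root_body.strip().splitlines())
    blocks = [f"{header} {{\n{indented}\n}}" for header in location_headers]
    # Anything not whitelisted above falls through to this catch-all.
    blocks.append("location / {\n    return 404;\n}")
    return "\n\n".join(blocks) + "\n"


if __name__ == "__main__":
    # Hypothetical body, standing in for whatever the current "/" block contains.
    body = "proxy_pass http://127.0.0.1:5000;"
    headers = ["location = /login", r"location ~ ^/user/\d+$"]
    print(render_whitelist(headers, body))
```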

So with a simple git clone and install:

…and a run of the tool:

…you can be sure that even if a dev accidentally enables ‘/console’ again, it won’t be served, because you have not explicitly allowed it. Instead you will be served an NGINX 404 page (or, if one is defined in your NGINX configuration file, a custom 404 page – like we do!):

Example: to apply nginx_flaskapp_whitelister to a Flask application called app, which is defined in the file /module/flask.py and run from /path/to/python/virtualenv, you would run the following command:

3) How it fits into our pipeline

We use SaltStack for configuration management, relying on its event-driven orchestration and automation, which is driven by configuration files referred to as ‘states’. Including our NGINX Flask App Whitelisting tool in our Salt states was quite simple: a state ensures the tool is present and installed, and the tool is run after the NGINX config file is deployed, but before the NGINX process is started.

4) Where you can find it

Epilogue

As trite as it sounds, nothing beats solid security design and multiple layers of detection. We were able to discover the compromise within minutes because all syscalls on our servers are audited and exceptions are alerted on. The deployed architecture means that a single server compromise leaks nothing that would let an attacker target other servers, and gives her no preferential access. Now, with all of our servers running nginx_flaskapp_whitelister, there’s less chance of them surprising us too.

Edit: Also check out https://blog.eutopian.io/elephant-proofing-your-web-servers/ by @nickdothutton