Today we've released Package Proxy, our internal solution to the software supplychainpocalypse. …

Blog Posts

Thinkst Canary now integrates with Google Security Operations SOAR, giving security teams a straightforward way to work high-confidence Canary alerts in the environment they are already used to. Canary incidents can be ingested into Google SecOps SOAR as cases, where analysts can review alert and event context (and acknowledge incidents when the work is done). …

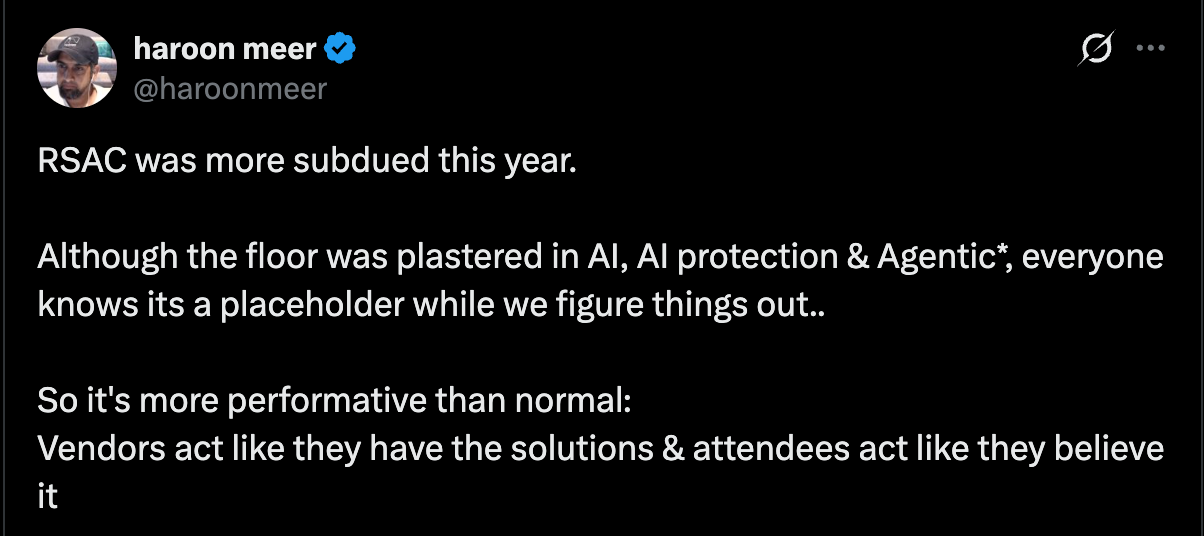

Why is the RSAC floor so dominated by promises that can’t be kept? Because incentives. …

Last week we concluded the deal to acquire 100% of UK-based DeceptIQ. We welcome them to the flock. DeceptIQ is built by red-teamers with a deep desire to turn the tables on attackers. In our ten years of doing Canary, we’ve never seen such a strong natural alignment. We are super excited to write future chapters (to help defenders win) together. …

Introduction

Canarytokens have proved themselves over the last decade as an easy-to-deploy breach detection tool. Our free Canarytokens service has supported AWS API keys since 2017. The concept is straightforward: you sprinkle decoy API keys in your code repos / Lambda configurations / virtual machine disks; when attackers use the credentials, you get an alert in your mailbox. They are an excellent (and simple) way to identify malicious actors inside your infrastructure, in the early stages of the …
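To make the decoy-key idea concrete, here is a minimal sketch of the matching step, assuming you already receive AWS CloudTrail records as parsed JSON dicts. It is not how the Canarytokens service is implemented; the names `DecoyAlert` and `find_decoy_usage` and the sample key IDs are hypothetical. The only AWS detail relied on is that CloudTrail records carry the caller's key under `userIdentity.accessKeyId`, alongside `eventName`, `sourceIPAddress`, and `eventTime`.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass(frozen=True)
class DecoyAlert:
    """Hypothetical alert shape: who used which decoy key, for what, from where."""
    access_key_id: str
    event_name: str
    source_ip: str
    event_time: str


def find_decoy_usage(records: Iterable[dict], decoy_key_ids: set[str]) -> Iterator[DecoyAlert]:
    """Yield an alert for every CloudTrail-style record made with a decoy key.

    No legitimate code ever uses these keys, so any match is treated as a
    high-confidence signal; there is no scoring or thresholding.
    """
    for record in records:
        # CloudTrail stores the caller's access key ID under userIdentity.accessKeyId.
        key_id = record.get("userIdentity", {}).get("accessKeyId")
        if key_id in decoy_key_ids:
            yield DecoyAlert(
                access_key_id=key_id,
                event_name=record.get("eventName", "?"),
                source_ip=record.get("sourceIPAddress", "?"),
                event_time=record.get("eventTime", "?"),
            )


# Hypothetical data: one decoy key planted in a repo, one ordinary key.
decoys = {"AKIAIOSFODNN7EXAMPLE"}
records = [
    {"eventTime": "2024-01-01T00:00:00Z", "eventName": "ListBuckets",
     "sourceIPAddress": "198.51.100.7",
     "userIdentity": {"accessKeyId": "AKIAIOSFODNN7EXAMPLE"}},
    {"eventTime": "2024-01-01T00:05:00Z", "eventName": "GetObject",
     "sourceIPAddress": "203.0.113.9",
     "userIdentity": {"accessKeyId": "AKIAEXAMPLEPRODKEY00"}},
]

for alert in find_decoy_usage(records, decoys):
    # prints: ALERT: decoy key AKIAIOSFODNN7EXAMPLE used for ListBuckets from 198.51.100.7
    print(f"ALERT: decoy key {alert.access_key_id} used for {alert.event_name} from {alert.source_ip}")
```

The design leans on the property that makes decoys attractive: because the set of decoy keys has no legitimate users, a simple membership check is enough, and there is no baseline of "normal" behaviour to learn.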

Like many in the industry, we are mentally preparing for the trip out to Las Vegas for the US’s crowning trio of big security conferences: BSidesLV, Black Hat USA, and DEF CON. Every year tens of thousands make the pilgrimage to the “Hacker Summer Camp” trifecta to see friends, learn from the smorgasbord of talks and trainings, and share their knowledge far and wide. Each year we at the ThinkstScapes HQ find great content worth highlighting from these longstanding …

You’re moments away from finishing a feature you’ve been working on for the last two weeks when you get a Slack notification that the frontend test pipeline has failed for the 824th time that year. It’s the same handful of flaky tests that fail whenever there’s a half-moon. You make a note to fix these tests and get back to finishing that feature. We were in this situation and asked ourselves whether we enjoyed building and maintaining our frontend test …

[This is a lightly edited internal post we’ve made public.] Last week we had booths at DevConf Joburg and DevConf Cape Town. They’re two ZA events run by the same crew with the same speakers, two days and 1,400 km apart. The organisers set the bar in ZA for polished, well-run events. Where the average event is in an old venue with limited food and chaotic organisation, DevConf is punctual, classy, and efficient. Francois & Victor (Jhb), and Leighton …

At Thinkst, we build tools to make attackers’ lives harder and defenders’ lives easier. Our latest Canarytoken does exactly that: the SAML IdP App Canarytoken (already available on canarytokens.org, and now available on customer Consoles too!). Where our Fake App Canarytokens for iOS and Android detect badness at the device level, SAML IdP App Canarytokens help at the identity level. Organisations rely on Single Sign-On (SSO) to manage authentication across their cloud applications. Attackers know this and target identity providers (IdPs) …

Introduction

A counterintuitive truth is that great products are defined both by the features they include and by those they don’t. We spend a lot of time pondering potential new features for Thinkst Canary to make sure the added value exceeds the inevitable cognitive complexity that new features (or new UX elements) bring. This post will dive into a recent Labs research effort that we ended up leaving on the cutting room floor.

Background

We are always on the …